Detection System of Record

What is a Detection System of Record?

A Detection System of Record (DSoR) is a vendor-neutral governance layer that continuously maps threat intelligence to detection coverage, measures detection effectiveness, and governs detection health across the full threat-to-detection operating loop.

For the cross-industry “system of record” pattern and category narrative, start with What is a Detection System of Record? (explainer). This page is the product and operating-model hub.

SecuMap is a Detection System of Record (DSoR): it implements the governance layer defined above.

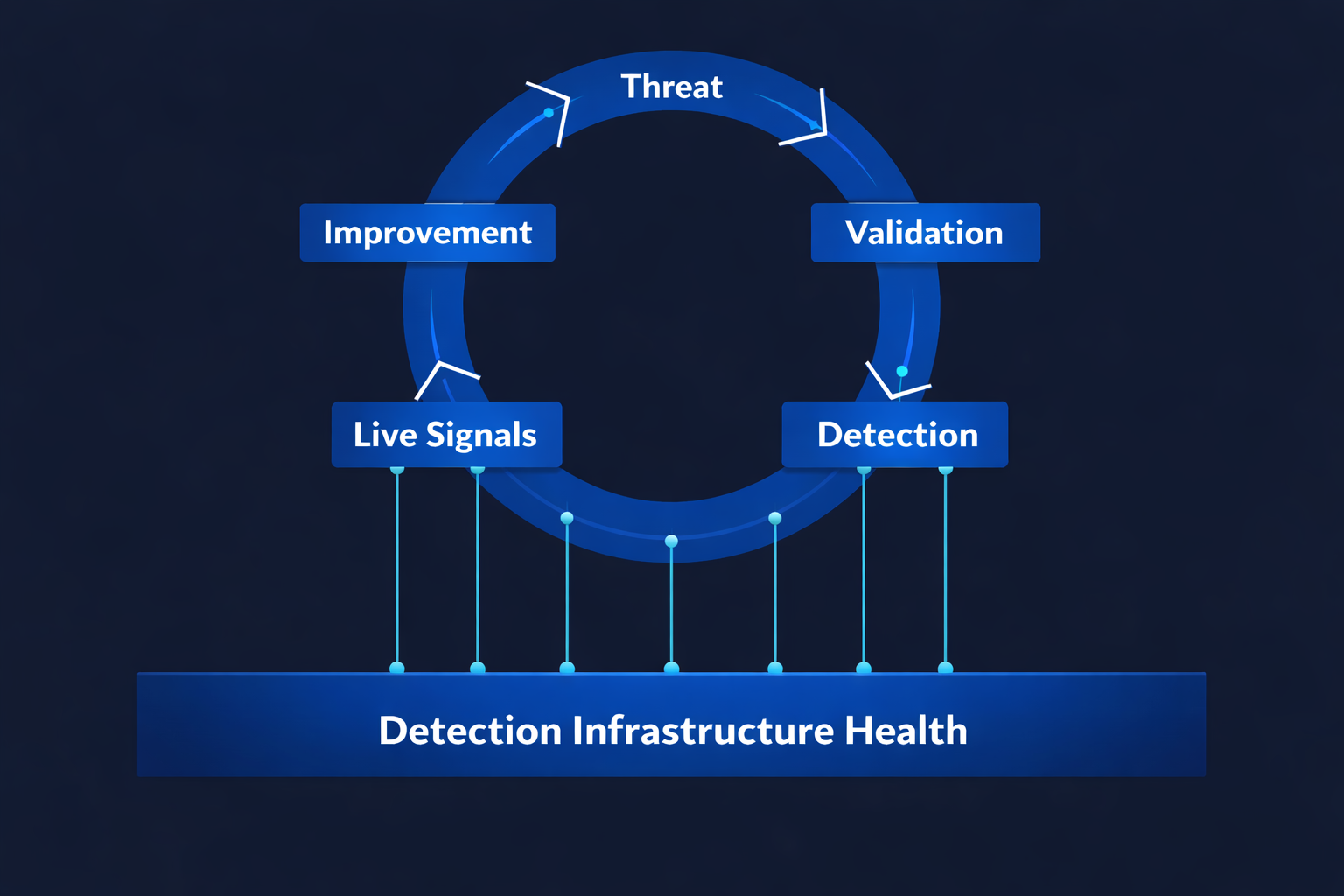

It governs detection effectiveness across the continuous threat-to-detection operating loop — from initial threat identification through validation, live operation, and structured improvement.

Operating above SIEM, EDR, BAS, and CTI systems, it does not execute detections. It defines how detection effectiveness is measured, validated, and improved across them.

Security tools execute. A Detection System of Record governs detection health.

Modern detection requires aligning multiple sources to a single threat model and proving effectiveness through live detection signals.

SecuMap maps security tools, detections, threat intelligence, and validation activity to MITRE ATT&CK — creating a unified, continuously updated view of detection coverage.

Detection performance is then measured through live detection signals — as rules trigger in production — enabling continuous validation, gap identification, and prioritised improvement.

This creates a unified ATT&CK-aligned detection model grounded in real production signal.
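To make the mapping concrete, here is a minimal sketch of how mapped coverage and live-confirmed coverage could be told apart for a set of priority techniques. The `Detection` class, its fields, and the sample rules are hypothetical and are not SecuMap's data model; only the technique IDs are real MITRE ATT&CK identifiers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    """A deployed rule and the ATT&CK techniques it claims to cover (hypothetical shape)."""
    rule_id: str
    tool: str                   # e.g. "siem" or "edr"
    techniques: frozenset       # ATT&CK technique IDs, e.g. {"T1059"}
    fired_in_production: bool   # has the rule produced a live signal?


def coverage(priority, detections):
    """Split priority techniques into mapped, live-confirmed, and gap sets."""
    mapped = set()
    live = set()
    for d in detections:
        hits = priority & d.techniques
        mapped |= hits
        if d.fired_in_production:
            live |= hits
    return {"mapped": mapped, "live_confirmed": live, "gaps": priority - mapped}


# Illustrative data only.
priority = {"T1059", "T1003", "T1566"}
detections = [
    Detection("siem-001", "siem", frozenset({"T1059"}), fired_in_production=True),
    Detection("edr-014", "edr", frozenset({"T1003"}), fired_in_production=False),
]
result = coverage(priority, detections)
```

In this toy data set, one technique is mapped and confirmed by a live signal, one is mapped but unproven, and one has no mapped rule at all. Separating those three states is the point of grounding the model in production signal rather than in the mapping alone.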

Why current security models still fail the governance test

Modern security operations rely on specialised systems: SIEM stores and searches logs. EDR produces alerts. BAS validates controls. CTI provides intelligence. Detection engineering builds rules.

What does not exist in most environments is a persistent governance layer that connects these systems into a single operational model — one that unifies threat intelligence, detection logic, incident outcomes, and validation results.

Without that layer, detection coverage is a belief, infrastructure health is invisible, and detection effectiveness is a slide title — not an auditable capability. Leadership asks whether priority threats are detectable today, and the honest answer is often “probably,” backed by static maps instead of a governed record.

Example: detection without governance (what actually breaks)

A threat team publishes a new campaign. Detection engineering files issues to tune rules. BAS shows a “pass” on a test bundle. The SOC is underwater on false positives. Six weeks later, nobody can show which deployed logic still maps to that campaign, which validation is current, and whether incident outcomes matched the assumed coverage. That is not a people failure; it is a missing system of record for detection as a function.

The same pattern repeats: rotating vendors, re-platforming, org changes, and M&A all silently invalidate yesterday’s “coverage” story because no single artefact links intent, implementation, validation, and live evidence.

With a Detection System of Record

With a DSoR, the programme holds a persistent record: what the organisation intends to cover, who owns the use case, which logic is deploy-relevant, what has been validated, and what the SOC and incidents prove in production. Improvement becomes a loop with owners and evidence — not a quarterly re-do of the same heatmap in a new template.

This is how governed operating cadence becomes possible without replacing your SIEM or BAS — SecuMap sits above the stack and unifies the lifecycle.

The diagram above shows the model. The steps below show how it operates in practice.

SecuMap continuously connects these layers using live detection signals — creating an always up-to-date view of detection coverage.

The Full Threat-to-Detection Operating Loop

SecuMap continuously links threats, detections, incidents, and validation into a single, always up-to-date ATT&CK-aligned system of record.

A Detection System of Record governs detection effectiveness across a continuous operating loop — not a linear workflow.

Improvement feeds back into threat understanding, restarting the loop. The Detection System of Record governs this entire cycle — providing persistent, system-level visibility across decision, implementation, live operation, and refinement.

- Threat — A threat is identified through intelligence, adversary behaviour, or emerging risk.

- Validation — The organisation validates its current posture using BAS or simulation to determine whether detection or prevention capability already exists.

- Detection — Where gaps are confirmed, detection logic is engineered and implemented across relevant tools.

- Live signals — Detection effectiveness is measured through live detection signals — as rules trigger in production — providing continuous validation of what actually works.

- Improvement — Detection logic is refined, tuned, matured — or decommissioned — based on validation, operational evidence, and what live signals show is working in production.
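The five stages above can be sketched as a small cyclic state machine. This is an illustrative model only: the `Stage` enum and `next_stage` function are hypothetical names, and the shortcut from validation straight to live signals when no gap is found is an assumption drawn from the wording "where gaps are confirmed", not a documented SecuMap behaviour.

```python
from enum import Enum


class Stage(Enum):
    THREAT = "threat"
    VALIDATION = "validation"
    DETECTION = "detection"
    LIVE_SIGNALS = "live_signals"
    IMPROVEMENT = "improvement"


# Enum members iterate in definition order, which is the loop order.
ORDER = list(Stage)


def next_stage(current, gap_confirmed=True):
    """Advance one step around the loop.

    Assumption: if validation shows capability already exists (no gap),
    engineering is skipped and the use case moves to live measurement.
    """
    if current is Stage.VALIDATION and not gap_confirmed:
        return Stage.LIVE_SIGNALS
    # Modulo arithmetic wraps IMPROVEMENT back to THREAT.
    return ORDER[(ORDER.index(current) + 1) % len(ORDER)]
```

The wrap-around from `IMPROVEMENT` to `THREAT` is what makes this a loop rather than a linear workflow.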

The Foundational Layer: Detection Infrastructure Health

Detection effectiveness is not determined solely by detection logic. It also depends on the operational health of the underlying security and telemetry infrastructure.

SIEM pipelines, endpoint agents, log ingestion flows, data normalisation, rule execution engines, and validation tooling are typically monitored in isolation — by different teams, using different metrics.

Yet detection performance depends directly on their stability, integrity, and reliability.

If telemetry is incomplete, if agents are degraded, if ingestion pipelines drop events, or if rule execution is impaired, detection effectiveness deteriorates — even when detection logic appears correct on paper.

This foundational dependency is rarely governed as part of the detection operating model. Start with the category definition on detection infrastructure health (structural); for narrative depth, see the blog: the hidden variable.

A Detection System of Record extends governance beneath the operating loop to incorporate infrastructure health as a first-class input into detection effectiveness.

Infrastructure reliability, telemetry integrity, and platform drift are continuously assessed in the context of detection performance: not as separate operational metrics, but as determinants of detection health.

Detection logic cannot be healthy if the infrastructure that executes it is not.

In this model, detection health cannot be considered in isolation from the systems that enable it.
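One way to picture infrastructure health as a first-class input is a check that refuses to call a detection healthy when any telemetry source it depends on is degraded. The sketch below is a hedged illustration: `SourceHealth`, `detection_health`, the status strings, and the thresholds (95% of agents reporting, at most 1% of events dropped) are all hypothetical and would need to be set per environment.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SourceHealth:
    """Health of one telemetry source (hypothetical shape)."""
    name: str
    agents_reporting: float  # fraction of expected agents checking in, 0.0 to 1.0
    events_dropped: float    # fraction of events lost in ingestion, 0.0 to 1.0

    def healthy(self, min_agents=0.95, max_drop=0.01):
        # Thresholds are illustrative defaults, not recommendations.
        return self.agents_reporting >= min_agents and self.events_dropped <= max_drop


def detection_health(logic_validated, required_sources, sources):
    """Infrastructure problems take precedence over the state of the logic."""
    degraded = [
        name for name in required_sources
        if name not in sources or not sources[name].healthy()
    ]
    if degraded:
        return "degraded-infrastructure"
    return "healthy" if logic_validated else "unvalidated"


# Illustrative data only.
sources = {
    "edr": SourceHealth("edr", agents_reporting=0.99, events_dropped=0.0),
    "proxy": SourceHealth("proxy", agents_reporting=0.80, events_dropped=0.0),
}
status = detection_health(True, ["edr", "proxy"], sources)
```

Here the rule's logic is validated, yet the status comes back as degraded because one required source is under-reporting. A source that is missing from the inventory altogether is treated the same way.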

A Governance Layer — Not Another Execution Tool

A Detection System of Record operates at the architectural layer above security tools. It does not execute detections. It governs how detection effectiveness is measured, validated, and improved across them.

Modern security operations are composed of specialised systems, each optimised for a specific domain:

- SIEM — aggregate telemetry, execute detection logic, generate alerts

- EDR — endpoint behaviour, prevention controls

- BAS — simulate adversary techniques, validate controls

- CTI — adversary intelligence and context

- Detection engineering — author and deploy detection logic

Each of these systems executes within its own domain. None provides persistent, system-level governance across them.

A Detection System of Record introduces that missing layer. It unifies threat intelligence, detection logic, validation outcomes, live operational signals, and improvement cycles within a single operational model — governing detection effectiveness across tools rather than within a single tool.

Execution remains in SIEM, EDR, and enforcement platforms. Validation remains in BAS. Intelligence remains in CTI. The Detection System of Record governs how these domains align, measure performance, and improve over time.

Category Boundaries

A Detection System of Record is distinct from adjacent security domains. It does not compete with them; it governs detection effectiveness across them.

| Adjacent domain | Primary focus | What a DSoR is not |
|---|---|---|
| SIEM | Telemetry ingestion and alert generation | Not a log store or alert console; it governs effectiveness across execution layers. |
| EDR | Endpoint detection and prevention | Not an endpoint agent; it traces detection health across tools. |
| BAS | Control validation through simulation | Not a simulator; it links validation outcomes to detection lifecycle state. |
| CTI | Adversary intelligence | Not an intel feed; it maps intel to coverage and effectiveness. |
| Detection engineering IDE | Rule authoring and deployment | Not an editor; it governs lifecycle and health of deployed logic. |
| Exposure / CTEM | Exploitable risk prioritisation | Not exposure scoring; it governs detection as a managed capability. |

Those systems execute, simulate, prioritise, or respond. A Detection System of Record governs how detection effectiveness is persistently measured, linked across the operating loop, and continuously improved.

The Operating Model for Detection as a Managed Capability

A Detection System of Record does more than connect tools. It defines the operating model by which detection is treated as a governed, measurable capability within security operations.

In many organisations, detection exists as distributed logic embedded in SIEM rules, endpoint controls, and monitoring playbooks. Ownership is fragmented. Validation is periodic. Performance is inferred from incident response rather than measured as a capability with defined health.

The DSoR introduces structural accountability. Detection capability is baselined. Validation results are linked to specific detection logic. Infrastructure health is incorporated into performance measurement. Live signal quality becomes observable. Maturity progression and drift are tracked over time.

Detection effectiveness becomes a governed system capability — not a by-product of tooling.

In this model, security operations move from reactive monitoring to managed detection health across the continuous operating loop.

Why It Matters

Modern security architectures have execution systems, validation systems, and exposure prioritisation frameworks. The Detection System of Record introduces a governance layer that connects them into a coherent operating model.

Without a Detection System of Record, coverage is assumed, validation is episodic, and detection effectiveness cannot be persistently governed.

With a DSoR, detection effectiveness becomes a governed system capability — persistently measured, traceable across the operating loop, and continuously improved.

Governance model: cadence, ownership, and forums

A DSoR only works if the operating model assigns clear ownership: who maintains the threat-to-detection map, who approves lifecycle transitions, who consumes BAS and purple-team results, and who closes the loop when incidents contradict assumed coverage. Cadence should be weekly for hot priorities and monthly for portfolio health — with an explicit artefact that is the record, not a slide deck snapshot.

SecuMap is designed so those roles work from the same governed objects: use cases, mapped techniques, validation state, and production signals. That reduces “meeting theatre” and makes governance something teams can execute, not just present.

If you need an executive-facing walkthrough of what that looks like in practice, request a briefing after you have reviewed the category explainer.

Evidence and auditability (what you can show under scrutiny)

Audit and leadership scrutiny increasingly ask for evidence, not assurances. A DSoR should let you show: the threat basis for a use case, the validation window and result, the deployed configuration context, the alert and incident history, and the correction taken when something drifted. That is the bar for “governed” — and it is different from exporting a static ATT&CK image once a quarter.
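The evidence threads listed above can be thought of as fields on a single record per use case, which makes gaps easy to spot. The sketch below assumes a hypothetical `EvidenceRecord` shape and a 90-day validation freshness window. Neither comes from SecuMap, and the sample values are invented.

```python
from dataclasses import dataclass, fields
from datetime import date
from typing import Optional


@dataclass
class EvidenceRecord:
    """Evidence held for one detection use case (hypothetical shape)."""
    use_case: str
    threat_basis: Optional[str] = None
    validation_date: Optional[date] = None
    validation_result: Optional[str] = None
    deployed_config_ref: Optional[str] = None
    alert_history_ref: Optional[str] = None
    last_correction: Optional[str] = None

    def missing(self):
        """Names of evidence fields that have not been populated."""
        return [f.name for f in fields(self) if getattr(self, f.name) is None]

    def validation_is_current(self, today, max_age_days=90):
        """True if a validation exists and is within the assumed freshness window."""
        if self.validation_date is None:
            return False
        return (today - self.validation_date).days <= max_age_days


# Illustrative record only.
record = EvidenceRecord(
    use_case="credential-dumping",
    threat_basis="campaign report",
    validation_date=date(2024, 1, 10),
    validation_result="pass",
)
gaps = record.missing()
```

A record with an empty `missing()` list and a current validation is one that can be shown under scrutiny. Anything else points to the specific evidence thread that still needs an owner.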

See SecuMap in the interactive demo to understand how those evidence threads appear in one place while execution remains in your existing tools.

Continue the model with focused guides

Use these focused pages to move from category definition into implementation priorities for your team.

- XDR vs Detection System of Record (unified signals vs programme)

- EDR vs Detection System of Record (endpoint vs programme)

- SOAR vs Detection System of Record (response vs governance)

- SIEM vs Detection System of Record (execution vs governance)

- What is MITRE ATT&CK? (threat model)

- What is MaGMa? (detection use case framework)

- What is a Detection System of Record? (category explainer)

- Detection coverage: map, validate, and close confidence gaps

- Detection infrastructure health: what can work in production

- Measure detection effectiveness with operational evidence

- Detection engineering platform model for lifecycle governance

- Threat-informed defense operating model

- See the SecuMap workflow in the interactive demo

- Request an executive briefing for rollout planning